Hadoop has been processing large data sets for around 10 years and is considered one of the best big data processing technologies. So why did Apache Spark come into existence? Let's understand this.

Spark was developed in 2009 at UC Berkeley's AMPLab by Matei Zaharia and was open sourced in 2010 under a BSD license. That should clear the air about what Apache Spark is. Let's look at how it evolved and whether it is a replacement for Hadoop MapReduce.

EVOLUTION OF APACHE SPARK

Many people think that Spark is an extension or a modified version of Hadoop, which is not true at all. Spark has its own cluster management and computation. Spark can use Hadoop in two ways, for storage and for processing; because Spark has its own cluster management, it uses Hadoop for storage purposes only.

A common claim is that Spark is "100x faster than Hadoop". What such claims signify is that Spark reduces the waiting time between queries and the time needed to run a program, which lets it run much faster than Hadoop.

There are many definitions of Spark circulating on the internet. You might have heard “Apache Spark is an open source big data processing framework built around speed, ease of use, and sophisticated analytics” or “Spark is a VERY fast in-memory, data-processing framework, like lightning fast.”

DO WE NEED TO INSTALL APACHE SPARK SOFTWARE

Spark was introduced to speed up Hadoop's computational processing, and it is now maintained by the Apache Software Foundation.


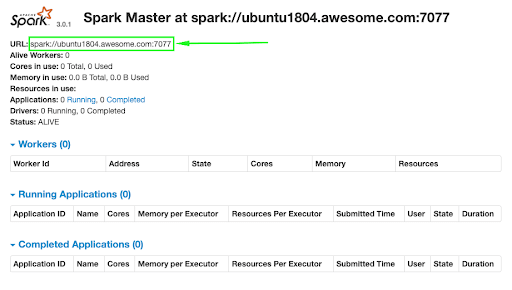

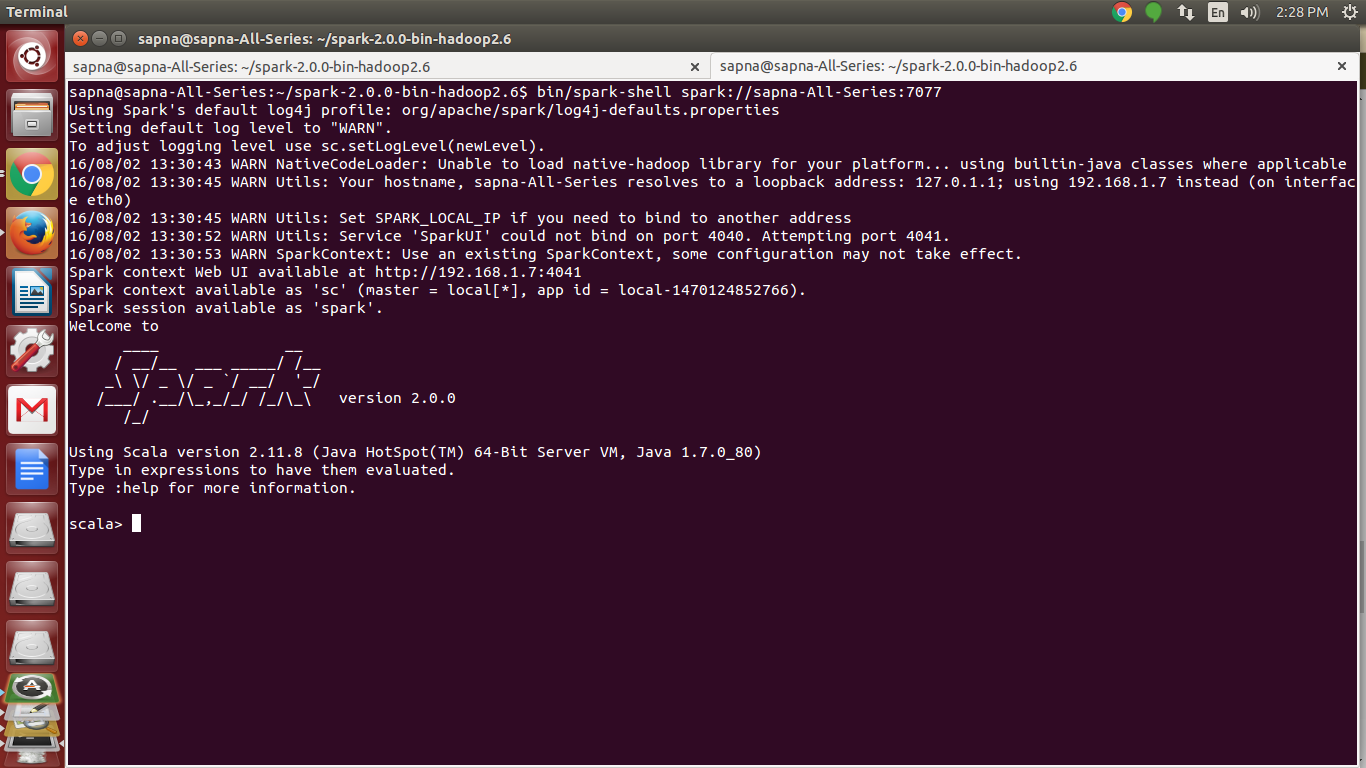

In this post we look at Apache Spark in detail: what it is, how it came into existence, its features, and finally how to install it successfully. We have already discussed Hadoop in detail in our previous posts. Most industries use Hadoop to analyze large data sets.

First, make sure your system is up to date.

Install Java, which Apache Spark requires:

`yum install java -y`

Once installed, check the Java version:

`java -version`

Spark installs Scala during the installation process, so we just need to make sure that Java and Python are present. Download the Scala archive with `wget`, then link it into place:

`sudo ln -s /usr/lib/scala-2.10.1 /usr/lib/scala`

Once installed, check the Scala version:

`scala -version`

Install Apache Spark by downloading the release archive with `wget`, then point `SPARK_HOME` at it:

`export SPARK_HOME=$HOME/spark-2.2.1-bin-hadoop2.7`

Set up some environment variables before you start Spark:

`echo 'export PATH=$PATH:/usr/lib/scala/bin' >> ~/.bash_profile`

`echo 'export SPARK_HOME=$HOME/spark-2.2.1-bin-hadoop2.7' >> ~/.bash_profile`

`echo 'export PATH=$PATH:$SPARK_HOME/bin' >> ~/.bash_profile`

The standalone Spark cluster can be started manually, i.e. by executing the start script on each node, or simply by using the available launch scripts. For testing, we can run the master and slave daemons on the same machine.

Open the required ports in the firewall:

`firewall-cmd --permanent --zone=public --add-port=6066/tcp`

`firewall-cmd --permanent --zone=public --add-port=7077/tcp`

`firewall-cmd --permanent --zone=public --add-port=8080-8081/tcp`

By default, the Spark master listens on port 7077 and serves its web UI on port 8080. Open your favorite browser, navigate to your server's address, and complete the required steps to finish the installation.

Congratulations! You have successfully installed Apache Spark on CentOS 7. Thanks for using this tutorial for installing Apache Spark on CentOS 7 systems. For additional help or useful information, we recommend you check the official Apache Spark website.

What is Apache Spark & How to Install Spark? Blog: GestiSoft
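The environment-variable step in the installation appends lines to the shell profile, so running it twice leaves duplicate entries. A minimal sketch of a helper that appends each line only once might look like the following. The function names `append_once` and `setup_spark_env` are hypothetical and not part of Spark, and the Spark directory name is assumed to match the 2.2.1 build used in the `SPARK_HOME` export.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of Spark): append a line to a file
# only if that exact line is not already present.
append_once() {
  local line="$1" file="$2"
  touch "$file"
  # -F: fixed string, -x: match the whole line, -q: no output
  grep -Fxq -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Hypothetical wrapper: write the three exports from the tutorial
# into the given profile file. The Spark directory name is an assumption.
setup_spark_env() {
  local profile="$1"
  append_once 'export PATH=$PATH:/usr/lib/scala/bin' "$profile"
  append_once 'export SPARK_HOME=$HOME/spark-2.2.1-bin-hadoop2.7' "$profile"
  append_once 'export PATH=$PATH:$SPARK_HOME/bin' "$profile"
}
```

Calling `setup_spark_env ~/.bash_profile` any number of times should leave exactly one copy of each line in the file.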
I will show you the step-by-step installation of Apache Spark on a CentOS 7 server.

Spark also supports a rich set of higher-level tools, including Spark SQL for SQL and structured data processing, MLlib for machine learning, GraphX for graph processing, and Spark Streaming.

This article assumes you have at least basic knowledge of Linux, know how to use the shell, and, most importantly, host your site on your own VPS. The installation is quite simple and assumes you are running as the root account; if not, you may need to add `sudo` to the commands to get root privileges.
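The root-account assumption can be checked in a script rather than by hand. The sketch below returns an empty prefix when the user ID is 0 and `sudo` otherwise. The function name `sudo_prefix` is hypothetical, and the `yum` command is only echoed, not executed, so the sketch is safe to run on any machine.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print "sudo" unless the given user ID is 0 (root).
# With no argument, it inspects the current effective user ID.
sudo_prefix() {
  local uid="${1:-$(id -u)}"
  if [ "$uid" -eq 0 ]; then
    printf ''
  else
    printf 'sudo'
  fi
}

# Build the Java install command from the tutorial with the right prefix.
# It is echoed rather than executed (an assumption made for safety).
SUDO="$(sudo_prefix)"
echo "Would run: ${SUDO:+$SUDO }yum install java -y"
```

The same prefix can be reused for the other privileged commands in the tutorial, such as the `ln -s` and `firewall-cmd` steps.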

Apache Spark is a fast and general-purpose cluster computing system. It provides high-level APIs in Java, Scala and Python, and an optimized engine that supports general execution graphs.